If you are currently evaluating how to scale your regional content strategy in 2026, you are likely caught in a frustrating bind. On one hand, you need to rapidly generate hundreds of hyper-localized service pages and regional assets to capture search market share. On the other, feeding your proprietary business data, local customer insights, and internal PDFs into cloud-based AI tools like ChatGPT or Claude has become a massive liability.

You aren't alone in this hesitation. Following a staggering 42% increase in AI-related enterprise data leaks over the last year, decision-makers are actively moving away from broad cloud-based LLMs. The conversation has shifted entirely. It is no longer just about how fast you can produce content; it is about establishing a "Private-First" AI strategy that prioritizes ethical compliance, data security, and hyper-regional semantic accuracy.

To dominate local search today, you need a "Security-Ethics Double-Play." This framework bridges the gap between the technical execution of local AI tools and the strict ethical compliance required to maintain Google's E-E-A-T standards. Let's break down exactly how you can operationalize this approach.

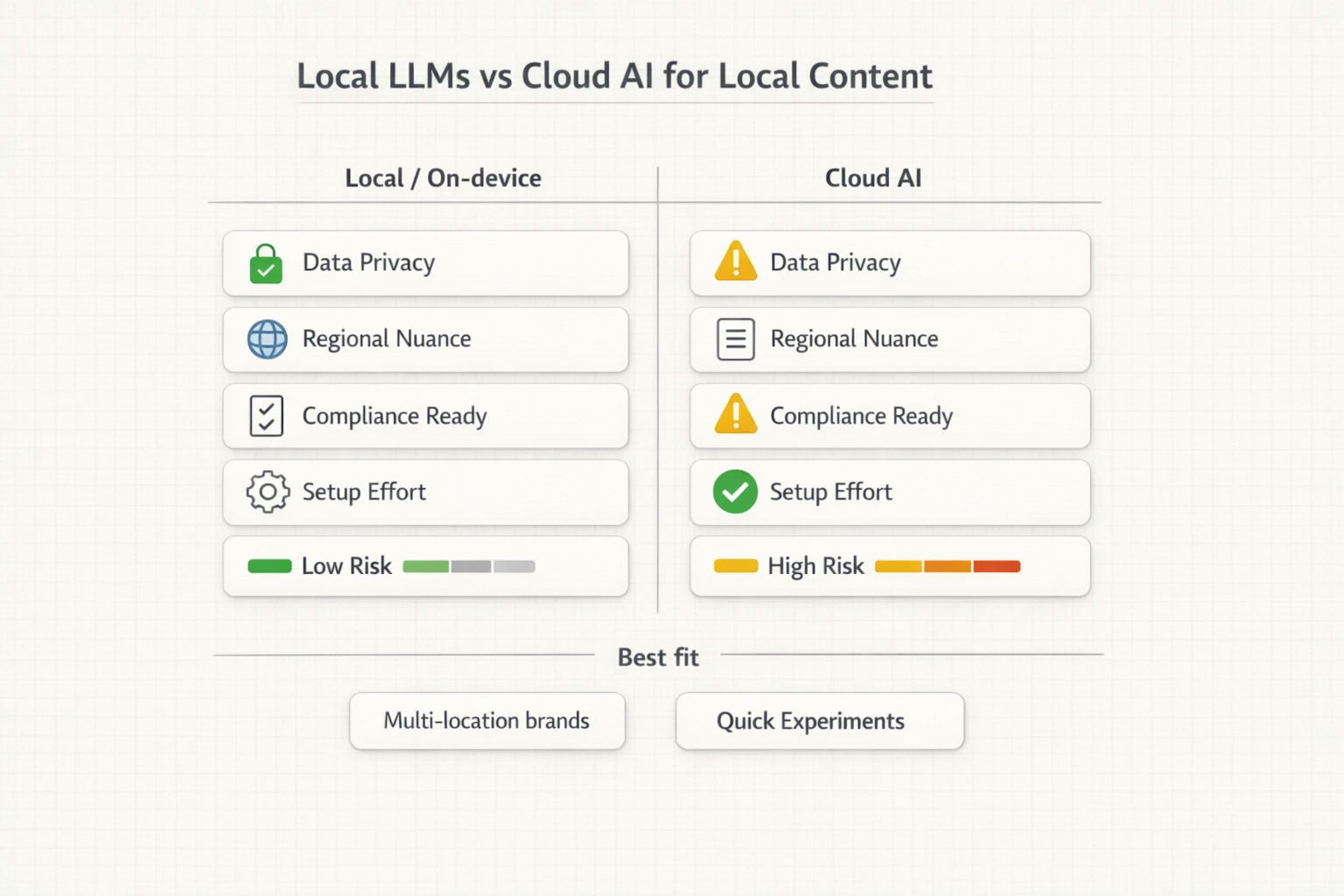

When you rely on public cloud AI to process regional market data or summarize internal PDFs, you risk that data being retained by the provider and, depending on its terms of service, used to train future models that your competitors can also access. For businesses that operate across multiple local markets, the nuances of your regional strategy (your localized pricing, market-specific pain points, and proprietary service delivery methods) are highly confidential.

This is where the transition to "On-device AI", meaning local LLMs run through tools like PrivateGPT, LM Studio, and GPT4All, has become the gold standard for enterprise marketing teams. By running these models entirely within your own closed environment, you eliminate cloud exposure. Processing proprietary assets locally ensures your regional marketing data never leaves your servers.
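One way to make "never leaves your servers" checkable is to refuse any model endpoint that does not point at the local machine. The sketch below is a minimal illustration, not a complete network control: the function name `is_loopback_endpoint` and the example URLs and ports are hypothetical, and it only inspects the URL string rather than actual traffic.

```python
import ipaddress
from urllib.parse import urlparse


def is_loopback_endpoint(url: str) -> bool:
    """Return True only if the URL's host is 'localhost' or a loopback IP literal."""
    host = urlparse(url).hostname
    if host is None:
        # No parsable host (for example, a URL without a scheme): reject.
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Not an IP literal (for example, a public domain name): treat as non-local.
        return False


# Hypothetical endpoints, for illustration only.
for candidate in ("http://localhost:1234/v1", "https://api.example.com/v1"):
    print(candidate, "->", "allowed" if is_loopback_endpoint(candidate) else "blocked")
```

A guard like this belongs in front of whatever client your team uses to call the model, so a misconfigured URL fails loudly instead of silently sending documents to a remote host. It complements firewall rules rather than replacing them.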

If you haven't yet assessed where your team might be leaking data through unauthorized cloud AI usage, conducting a thorough enterprise SEO audit that includes a review of your AI workflows should be your immediate next step.

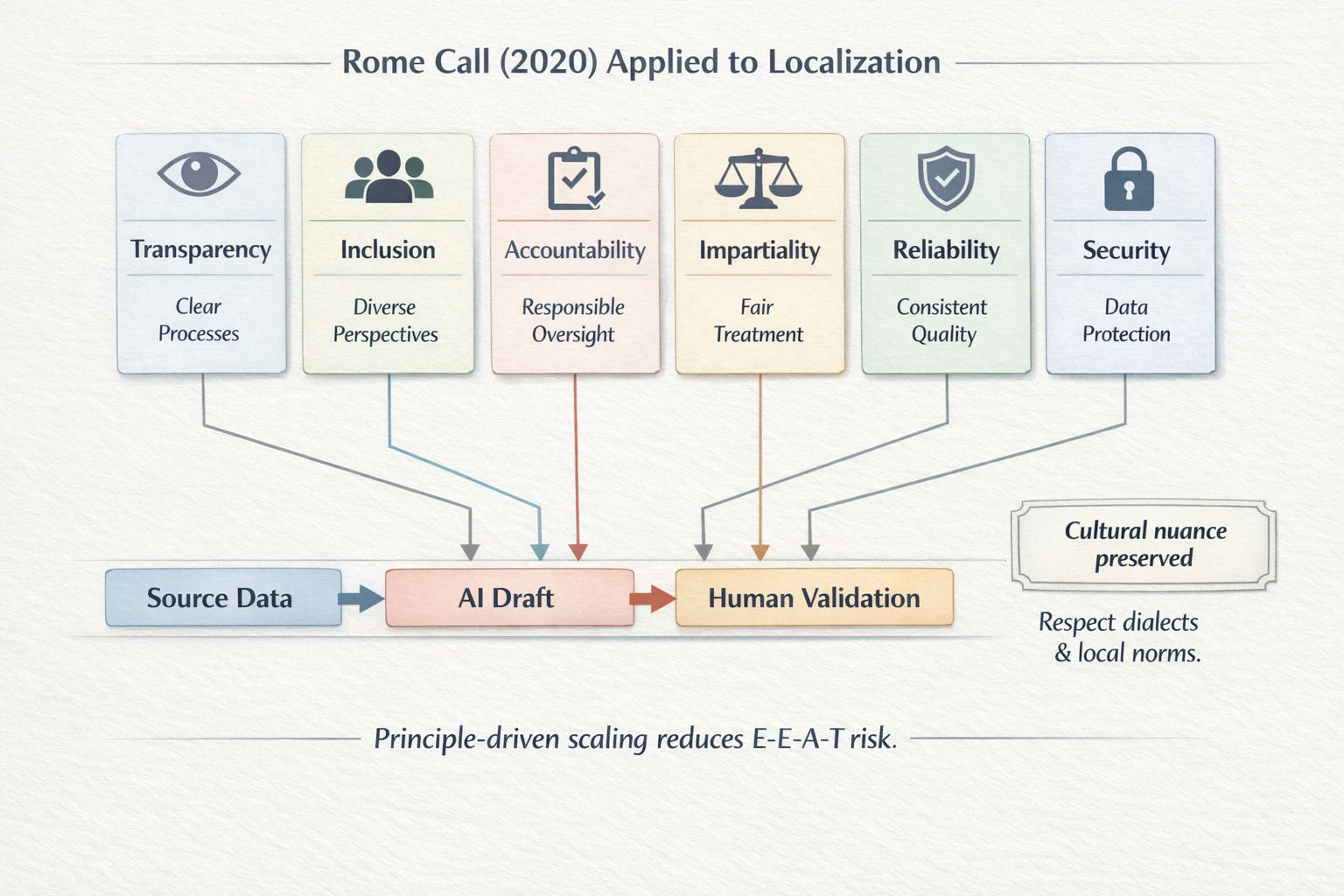

For an AI scaling strategy to be sustainable in 2026, it must adhere to established ethical frameworks. The Rome Call for AI Ethics, signed on February 28, 2020, introduced six foundational principles of "Algorethics": Transparency, Inclusion, Responsibility, Impartiality, Reliability, and Security and Privacy.

While these originated as high-level corporate governance concepts, today’s top-performing SEO strategies use them as operational blueprints for content localization.

Consider the principle of Inclusion. Broad cloud AI tools are notorious for delivering generic translations that strip away regional character. When scaling content across borders or distinct regional markets, applying the Inclusion principle means specifically training your local LLM on regional dialects, colloquialisms, and semantic variations. This hyper-local semantic optimization ensures your content resonates authentically with local buyers rather than reading like a sanitized corporate translation.

When you map out geographic keywords and feed them into a secure, localized LLM, the output becomes significantly more nuanced. It preserves the cultural and linguistic detail that local users actually search for. This rigorous attention to local relevance is the same philosophy that drives successful multilingual link building campaigns: focusing on in-market cultural accuracy rather than sheer volume.

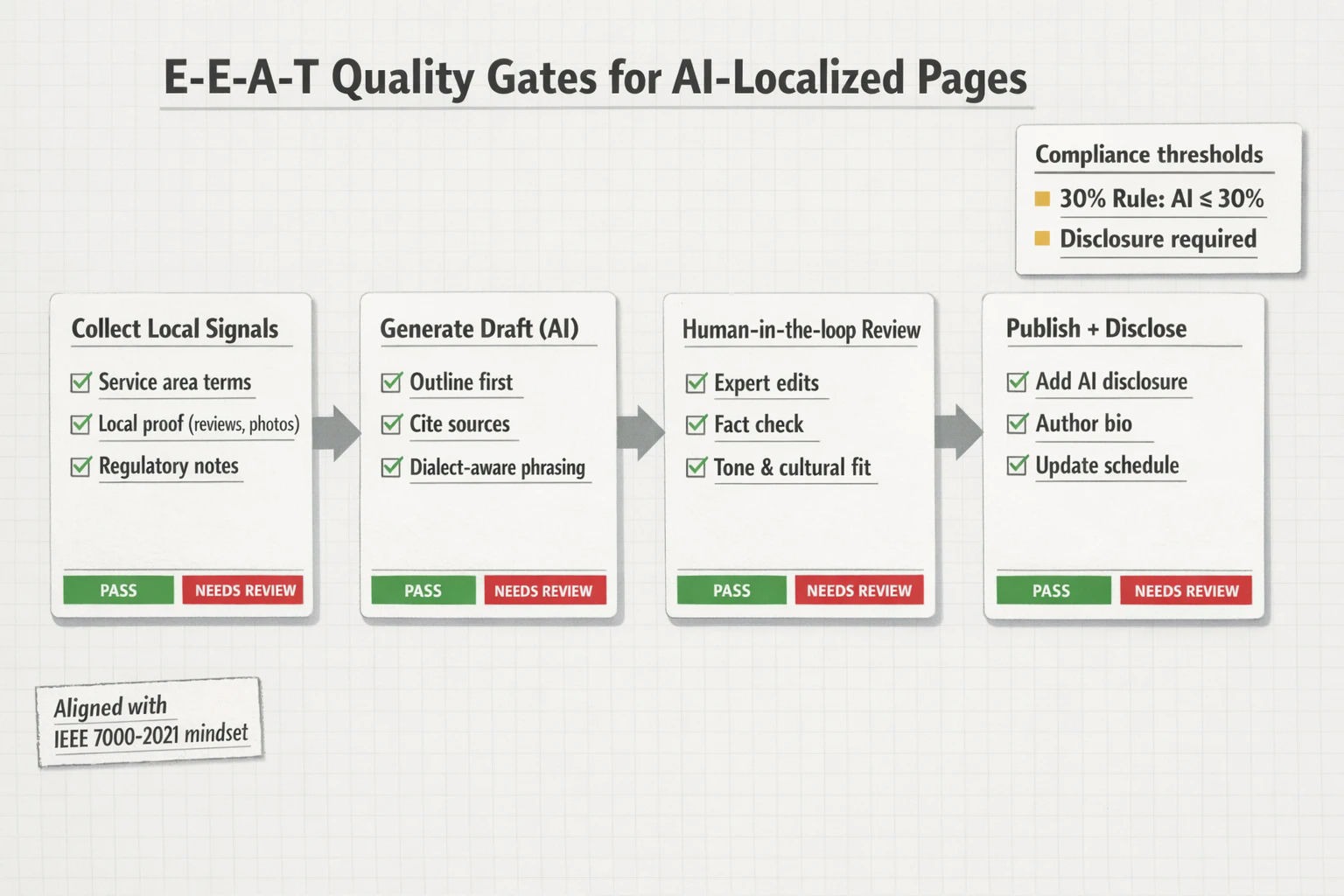

As AI generation scales, search engines and institutional bodies have cracked down heavily on unverified, mass-produced content. A critical standard that has emerged from academic and professional bodies, including guidelines aligned with IEEE standards, is the 30% Rule.

This guideline dictates that AI-generated text should not exceed 30% of a final published work if it is to maintain its "originality" and "integrity." The remaining 70% must consist of proprietary insights, localized data, human-authored subject matter expertise, and bespoke research.
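The 30/70 split is simple arithmetic once you decide how to measure it. The sketch below assumes word count as the measure, which is only one possible proxy; the function names and the example figures are hypothetical.

```python
def ai_share(ai_words: int, total_words: int) -> float:
    """Fraction of the published word count that was AI-generated."""
    if total_words <= 0:
        raise ValueError("total_words must be positive")
    if not 0 <= ai_words <= total_words:
        raise ValueError("ai_words must be between 0 and total_words")
    return ai_words / total_words


def within_30_percent_rule(ai_words: int, total_words: int, limit: float = 0.30) -> bool:
    """True if the AI-generated share does not exceed the limit (30% by default)."""
    return ai_share(ai_words, total_words) <= limit


# Hypothetical 1,200-word service page.
print(within_30_percent_rule(300, 1200))  # 300 / 1200 = 0.25, under the limit
print(within_30_percent_rule(400, 1200))  # 400 / 1200 is about 0.33, over the limit
```

On a 1,200-word page, this means at most 360 words can come from the model, with the remaining 840 or more supplied by your own experts and data.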

Furthermore, aligning with IEEE 7000-2021, the IEEE standard for addressing ethical concerns during system design, calls in practice for a "Human-in-the-loop" validation process. You cannot simply hit "generate" and publish. Your workflow must incorporate expert review to address ethical concerns, verify factual accuracy, and integrate the proprietary voice of your brand.
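A review gate like this can be enforced in software so that nothing is published until a named person has signed off on each check. The sketch below is a hypothetical illustration: the `Draft` class, its check names, and the example reviewer are assumptions drawn from the three review goals above, not part of any standard.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


def _default_checks() -> Dict[str, bool]:
    # One flag per review goal named in the text; all start unapproved.
    return {
        "ethical_concerns_addressed": False,
        "facts_verified": False,
        "brand_voice_integrated": False,
    }


@dataclass
class Draft:
    title: str
    reviewer: Optional[str] = None
    checks: Dict[str, bool] = field(default_factory=_default_checks)

    def approve(self, reviewer: str, check: str) -> None:
        """Record that a human reviewer has signed off on one check."""
        if check not in self.checks:
            raise KeyError(f"unknown check: {check}")
        self.reviewer = reviewer
        self.checks[check] = True

    def can_publish(self) -> bool:
        """Publishing is allowed only after a reviewer has passed every check."""
        return self.reviewer is not None and all(self.checks.values())


draft = Draft(title="Emergency plumbing in a hypothetical city")
print(draft.can_publish())  # False: no human has reviewed it yet
for name in list(draft.checks):
    draft.approve("hypothetical.reviewer", name)
print(draft.can_publish())  # True: every check has a human sign-off
```

The design choice here is that the default state is "blocked": a freshly generated draft can never be published by accident, only by explicit approval.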


A question we frequently hear from teams evaluating this transition is: does AI content rank in Google consistently? The answer is yes, but only when it is governed by stringent quality assurance and E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) protocols.

To protect your rankings, you must blend AI efficiency with human authority. AI is exceptional at building outlines from local PDFs and formatting data, but it lacks genuine "Experience."

When deploying local service pages, human reviewers must inject real-world case studies, local client testimonials, and staff credentials. This combination creates highly authoritative SEO-driven content that naturally earns backlinks and builds true page authority. Integrating a strict QA process, much like an 18-point checklist used for evaluating high-quality backlink prospects, ensures that every generated page adds unique value to the search ecosystem.

Transitioning to local AI tools like PrivateGPT requires intentional infrastructure planning. Unlike cloud solutions that run on someone else's servers, local LLMs run on your own hardware. For marketing agencies and enterprise SEO teams, this means equipping your workspace with machines capable of running large-parameter models efficiently.

Typically, a modern setup for local PDF summarization and content generation requires hardware with dedicated neural processing units or high-end GPUs to prevent bottlenecks. When configured correctly, this localized infrastructure allows you to process thousands of localized data points without a single packet of information leaving your firewall.
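A rough way to size that hardware is to estimate how much memory the model weights alone will occupy: parameter count times bits per weight, divided by eight to get bytes. The sketch below uses that arithmetic; the model sizes are hypothetical examples, and the result is a lower bound because it ignores the context cache, activations, and runtime overhead.

```python
def estimate_weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    if params_billions <= 0 or bits_per_weight <= 0:
        raise ValueError("both arguments must be positive")
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Hypothetical model sizes at common precisions.
for params, bits in ((7, 16), (7, 4), (70, 4)):
    gb = estimate_weight_memory_gb(params, bits)
    print(f"{params}B parameters at {bits}-bit: about {gb:.1f} GB for weights")
```

For example, a 7-billion-parameter model needs about 14 GB for weights at 16-bit precision but about 3.5 GB when quantized to 4-bit, which is often the difference between needing a workstation GPU and fitting on a well-equipped laptop.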

Many organizations find that outsourcing this highly technical workflow to specialized partners is more efficient than building the infrastructure from scratch. Just as link building for SEO agencies requires dedicated systems and established publisher relationships, ethical AI localization requires specialized setups that blend technical SEO with advanced data security. This is a critical component of modern generative engine optimization, ensuring your brand is cited accurately by AI answer engines while your underlying data remains secure.

The key is adhering to the 30% Rule and using a comprehensive SEO checklist for blog posts to mandate human review. Your local LLM should be used primarily for data structuring, PDF summarization, and outlining. Human experts must write the localized insights, ensuring the final asset demonstrates genuine E-E-A-T.

Yes, and in many ways, they do it better than generalized cloud AI. Because you can fine-tune local models on your proprietary regional data and localized documents, they can be highly specific to regional dialects, adhering to the Rome Call's principle of Inclusion.

Under IEEE 7000-2021 guidelines and the Rome Call's Transparency principle, disclosure is highly recommended. Implementing a standardized "IEEE Author Disclosure Template" or a simple transparency snippet in your footer builds trust with your audience and satisfies institutional guidelines regarding algorithmic transparency.

For businesses handling sensitive client data, the risk mitigation alone justifies the cost. A single data leak from feeding proprietary PDFs into a cloud LLM can cause irreparable brand damage. However, if building internal infrastructure isn't feasible, partnering with an SEO firm that already utilizes private-first AI workflows is a scalable alternative.

Scaling your local content strategy doesn't mean you have to compromise on data privacy or search quality. By adopting a private-first AI workflow that honors the Rome Call principles and the 30% Rule, you protect your proprietary data while dominating regional search results.

The evaluation phase of your journey is the perfect time to audit your current content operations. Take a hard look at where your data is flowing, how much human oversight is built into your publishing process, and whether your regional pages truly reflect local semantic nuances. When you prioritize rigorous quality assurance and ethical AI integration, you build a sustainable foundation that search engines and users will consistently reward.