Remember when getting to the top of a search results page simply meant making sure your target keyword appeared exactly five times in your content?

We’ve come a long way since then. In 2026, search algorithms no longer just "read" text; they interpret it. Today, 82.5% of Google's AI Overview citations link to deep, specialized content pages rather than generic, surface-level homepages.

For online businesses, affiliates, and digital marketers, this represents a massive paradigm shift. AI-driven search models prioritize deep, semantically rich, and genuinely helpful content. To become an indispensable resource in your niche, you have to understand exactly how AI algorithms evaluate the quality of a page before they ever decide to link to it or cite it.

"Deep content" is material that goes beyond surface-level definitions. It answers the immediate question a user has, anticipates their next three questions, and provides unique, experience-based insights that generative AI simply cannot hallucinate.

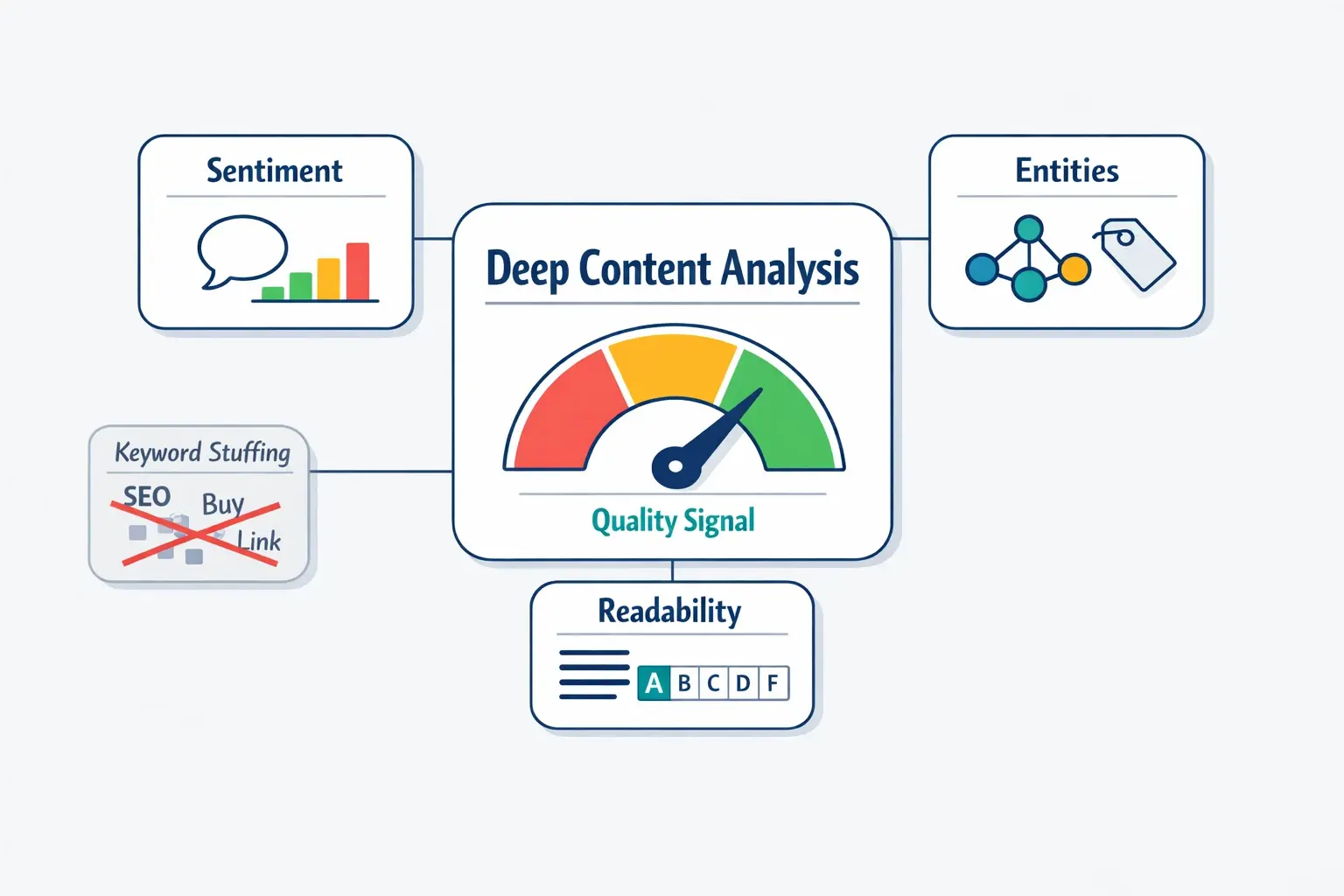

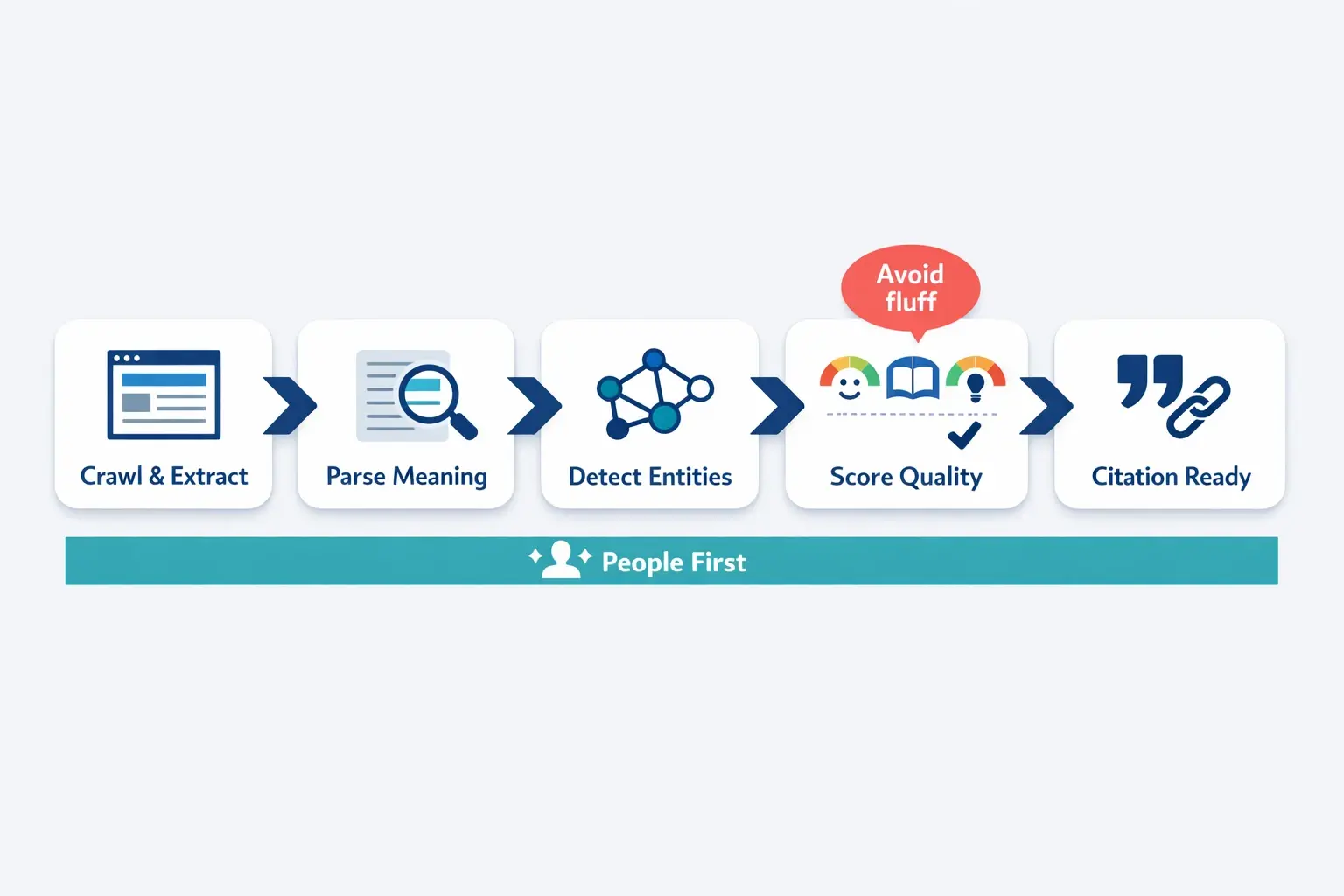

When AI algorithms evaluate a potential linking page today, they aren't scanning for a primary keyword. Instead, they are conducting a deep content analysis. They are trying to verify if your content aligns with Google’s core philosophy: "original, helpful content written by people, for people."

To do this, AI models break your content down into structural data points, evaluating three core signals: sentiment, entities, and readability.

If you want your website to earn authoritative citations from AI Overviews, you need to look at your pages the way Natural Language Processing (NLP) models do.

Sentiment analysis is how AI understands the tone, emotion, and context behind your words. It’s no longer binary (positive vs. negative). Advanced AI evaluates the nuance of sentiment to gauge genuine experience and expertise.

For example, a generic review might say: "This software is good." A deep content review says: "While the software excels in rapid data processing, its reporting dashboard lacks the customization required for enterprise-level use."

The second sentence is balanced and highly specific, which signals to the AI that the author has actual, hands-on experience (the "Experience" in E-E-A-T).
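To make the contrast concrete, here is a toy Python sketch. It is not how any search engine actually scores sentiment; production systems rely on trained models. It assumes two tiny hand-made cue lists (`POSITIVE_CUES` and `NEGATIVE_CUES`, both hypothetical) and labels a sentence as "mixed" when it contains cues of both kinds, which is roughly what separates the two reviews above.

```python
import re

# Hypothetical cue lists for illustration only; real systems use trained models.
POSITIVE_CUES = {"good", "great", "excels", "fast", "reliable"}
NEGATIVE_CUES = {"lacks", "slow", "poor", "missing", "limited"}


def sentiment_profile(text: str) -> dict:
    """Count positive and negative cue words and label the overall tone."""
    words = re.findall(r"[a-z']+", text.lower())
    positive = sum(word in POSITIVE_CUES for word in words)
    negative = sum(word in NEGATIVE_CUES for word in words)
    if positive and negative:
        label = "mixed"
    elif positive:
        label = "positive"
    elif negative:
        label = "negative"
    else:
        label = "neutral"
    return {"positive": positive, "negative": negative, "label": label}


generic = "This software is good."
detailed = (
    "While the software excels in rapid data processing, its reporting "
    "dashboard lacks the customization required for enterprise-level use."
)

print(sentiment_profile(generic)["label"])
print(sentiment_profile(detailed)["label"])
```

The generic review carries a single positive cue, while the detailed review carries one cue of each polarity, so only the second is flagged as nuanced.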

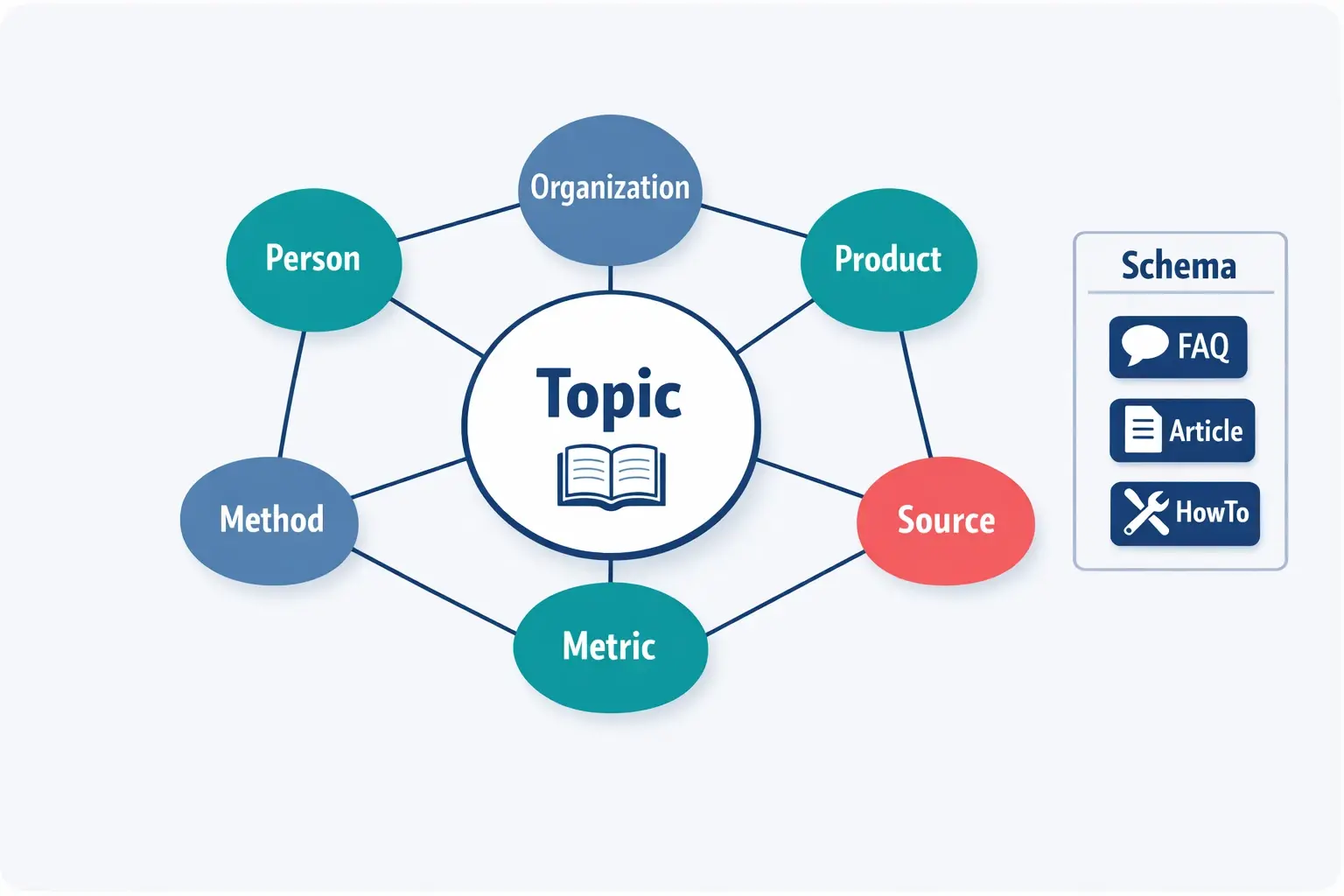

Named Entity Recognition (NER) is how AI identifies and categorizes the "things" in your content—people, places, organizations, and concepts.

If you write an article about "Apple," the AI needs to know if you mean the fruit or the trillion-dollar tech company. By surrounding the word "Apple" with related entities like "Tim Cook," "iPhone," and "Silicon Valley," you create a localized knowledge graph. The denser and more accurate your entity relationships are, the higher your content's linking page quality score becomes.
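The "Apple" example can be sketched in a few lines of Python. This is a simplified illustration, not a real NER pipeline: the `SENSE_CONTEXT` table and the `disambiguate` helper are hypothetical, and a real model learns these associations from data instead of reading them from a hand-written dictionary.

```python
import re

# Hypothetical context sets for two senses of "Apple"; a real NER system
# learns these associations from data rather than from a hand-written table.
SENSE_CONTEXT = {
    "Apple (company)": {"tim cook", "iphone", "silicon valley", "mac"},
    "apple (fruit)": {"orchard", "pie", "cider", "harvest"},
}


def disambiguate(text: str) -> str:
    """Return the sense whose context entities appear most often in the text.

    Ties resolve to the first sense listed; no match at all yields "ambiguous".
    """
    lowered = text.lower()
    scores = {}
    for sense, context in SENSE_CONTEXT.items():
        # Whole-word matching, so "mac" does not fire on "machine".
        scores[sense] = sum(
            bool(re.search(r"\b" + re.escape(entity) + r"\b", lowered))
            for entity in context
        )
    best_sense = max(scores, key=scores.get)
    return best_sense if scores[best_sense] > 0 else "ambiguous"


print(disambiguate("Apple announced that Tim Cook will unveil the next iPhone in Silicon Valley."))
print(disambiguate("We picked every apple in the orchard and baked a pie."))
print(disambiguate("I like Apple."))
```

The more related entities surround the ambiguous word, the more evidence the scorer has, which mirrors the point about dense, accurate entity relationships.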

A common misconception is that complex language sounds more authoritative. In reality, AI favors clarity.

Readability scores measure how easily a user can digest your content. If an AI summary engine struggles to parse your sprawling, 40-word sentences filled with industry jargon, it won't cite you. High-quality linking pages balance expert-level insights with simple, concise delivery.
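One widely used readability metric is the Flesch Reading Ease score, where higher values indicate easier text. The article does not say which metric any search engine uses, so treat the following as an illustrative sketch only. The syllable counter is a rough vowel-group heuristic (an assumption made for brevity), so scores will differ slightly from dedicated tools.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups, discount a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: 206.835 - 1.015 * (words / sentences)
    - 84.6 * (syllables / words)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(word) for word in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


plain = "AI favors clear writing. Keep each sentence short."
dense = (
    "Organizations endeavoring to operationalize multidimensional optimization "
    "methodologies frequently encounter considerable interpretability complications."
)

print(round(flesch_reading_ease(plain), 1))
print(round(flesch_reading_ease(dense), 1))
```

Running a draft through a check like this before publishing makes long, jargon-heavy sentences easy to spot: the dense example scores far lower than the plain one.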

None of these signals operates in isolation. They all fall under the umbrella of Semantic SEO.

Semantic SEO shifts the focus away from isolated search terms and places it entirely on topical depth and search intent. When you run a comprehensive enterprise SEO audit, one of the first things you'll look for is whether your website clearly communicates the relationship between different topics to search engines.

How do you build this semantic foundation?

By mastering these elements, you effectively step into the realm of generative engine optimization, proactively structuring your site so AI systems naturally want to feature it.

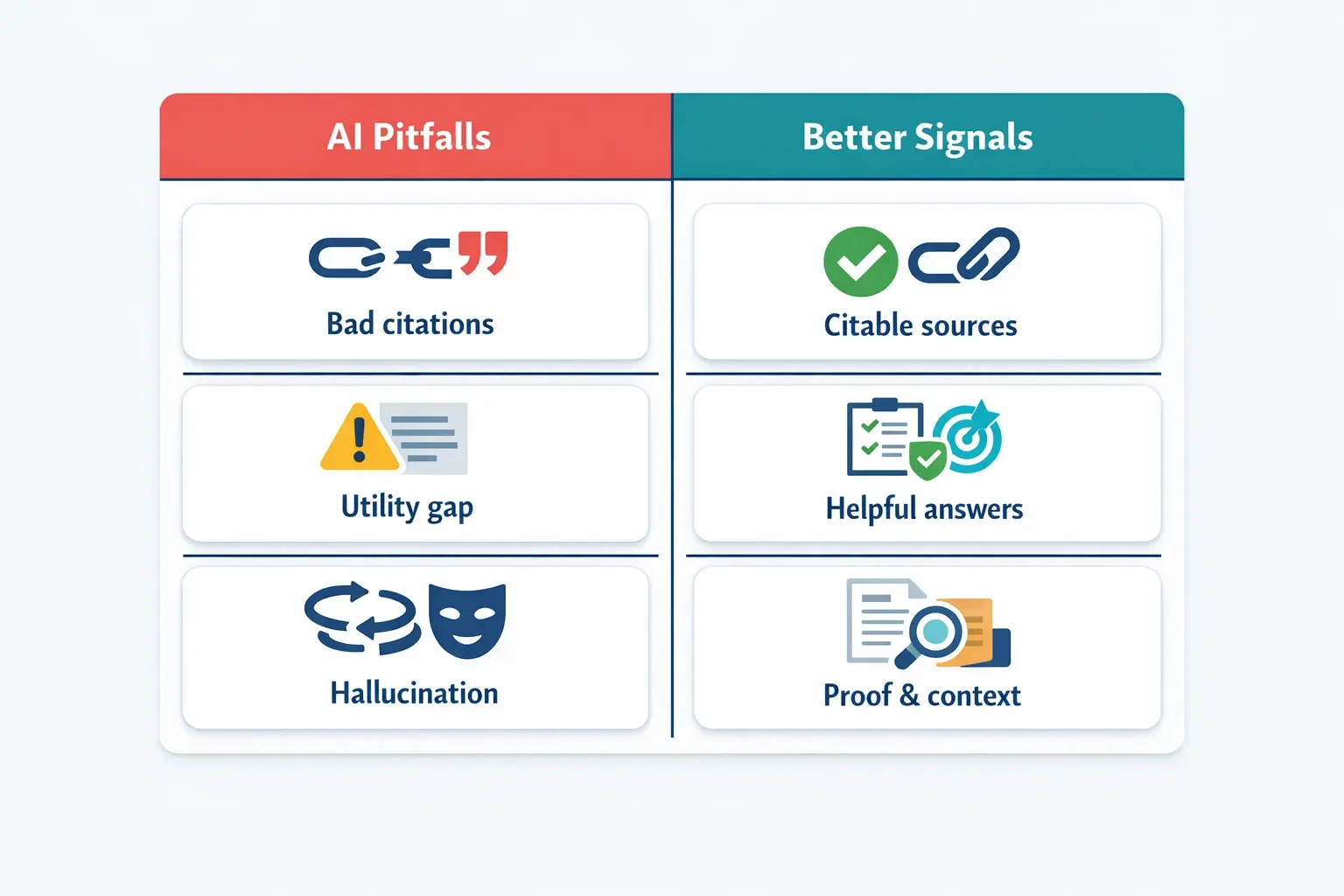

It’s easy to assume that if you write a decent article, AI will automatically find it and cite it. But in 2026, we frequently see great content fail because of the "Utility Gap." This happens when content is beautifully written for humans but lacks the structured entities and clear answers that AI parsers require.

Furthermore, out of desperation to regain lost traffic, some webmasters resort to manipulating these algorithms. Be warned: if you use black-hat SEO tactics like creating fake AI-generated entity networks or spamming schema markup, modern AI search engines will identify and heavily penalize your site. The algorithms are now trained to detect E-E-A-T discrepancies and logical hallucinations.

Before you hit publish on your next piece of content, use this checklist to ensure you are meeting the demands of deep content analysis:

To make sure your content creation team is aligned with these new standards, we recommend adopting a dedicated SEO checklist for blog posts and keeping a technical on-site SEO checklist handy for your developers.

Deep content analysis refers to how modern AI search engines evaluate a webpage's quality by looking past keyword matching. The AI assesses semantic richness, the density of recognized entities (people, places, things), the nuance of sentiment (which demonstrates real human experience), and the overall readability to determine if a page provides true value to a user.

Traditional algorithms leaned heavily on backlinks and keyword placement. Today's Google AI evaluates the "usefulness" of a page based on E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness). It uses Natural Language Processing to verify if the content answers intent, offers original insights, and connects logically to known facts (entity graphs) before it considers the page a high-quality link source.

Absolutely not. This is a common misconception. AI generates content based on existing data; it cannot generate new lived experiences or unique human insights. Deep content analysis algorithms are specifically designed to filter out generic AI-generated fluff and highlight content that showcases genuine, human-first expertise.

Start by structuring your content clearly with targeted headings and FAQ sections. Use plain, accessible language (high readability). Ensure you are thoroughly covering the semantic entities related to your topic, and back up your claims with original data or personal experience to display a clear, authoritative sentiment.
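One way to make an FAQ section machine-readable is schema.org's `FAQPage` structured data, serialized as JSON-LD. The sketch below is a minimal example, not a guarantee of any citation or ranking effect. The `build_faq_jsonld` helper and the sample question are hypothetical; only the `FAQPage`, `Question`, and `Answer` types and their `mainEntity`, `name`, `acceptedAnswer`, and `text` properties come from the schema.org vocabulary.

```python
import json


def build_faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical question paired with an answer paraphrased from this article.
faqs = [
    (
        "What is deep content analysis?",
        "It is how modern AI search engines evaluate a page's quality by "
        "looking past keyword matching.",
    ),
]

print(build_faq_jsonld(faqs))
```

The resulting JSON would typically be embedded in a `<script type="application/ld+json">` tag. Keep the markup limited to questions and answers that actually appear on the page; marking up content that is not there is the kind of schema spam warned against earlier.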

The shift from keyword hunting to semantic entity mapping doesn't have to be overwhelming. By understanding that AI simply wants to serve the most helpful, well-structured, and deeply analyzed content to users, you can begin to transform your website from a collection of pages into a connected, authoritative knowledge base.

As you audit your current content strategy, look for the gaps where you can replace surface-level writing with deep, experience-driven insights. The algorithms are reading more closely than ever before, so make sure what you’re giving them is worth citing.