If you’ve been watching your website analytics over the past few years, you might have noticed a profound shift. The traditional playbook of publishing decent content, building a few links, and watching the organic traffic roll in simply doesn't work the way it used to. As we navigate the digital landscape of 2026, generative AI platforms like Google AI Overviews, ChatGPT, and Perplexity have fundamentally changed how users find information.

We are no longer just optimizing for ten blue links. We are optimizing to be the cited source in an AI's conversational response.

This shift has introduced a massive new gatekeeper into the marketing world: Algorithmic Trust Scoring. AI algorithms don’t just read your content; they ruthlessly evaluate your brand's genuine authority and trustworthiness. They are looking beyond traditional SEO metrics to analyze a complex web of E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals.

If you've ever wondered why an AI chooses to cite one website over another, the answer lies in how it calculates trust. Let’s demystify the algorithm and explore how you can build a digital footprint that AI platforms implicitly trust.

For over a decade, marketers relied heavily on third-party metrics to gauge website strength. It was common practice to ask how domain authority is calculated in order to understand a site's likelihood of ranking. While these traditional metrics still provide a helpful snapshot of backlink profiles and general page authority, they are inherently human-made proxies.

AI engines look at the world differently.

Algorithmic Trust Scoring is the dynamic, real-time process by which machine learning models evaluate the systemic credibility of a domain, an author, or a piece of content. Unlike traditional search algorithms that might have used authority as a "ranking booster" (nudging you from position 4 to position 2), modern AI treats trust as a binary gatekeeper.

If a piece of content does not pass the algorithmic trust threshold, the AI will simply not synthesize or cite it—no matter how perfectly keyword-optimized the text might be. AI systems are increasingly trained to avoid "hallucinations" and factual errors, meaning their primary directive is risk mitigation. They only want to pull from the most unassailably credible sources.
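To make the difference between a "ranking booster" and a "binary gatekeeper" concrete, here is a toy Python sketch. The candidate pages, their relevance and trust scores, the 0.3 boost weight, and the 0.7 threshold are all hypothetical; no AI platform publishes how (or whether) it computes a single trust score like this.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float  # hypothetical 0-1 match to the user's query
    trust: float      # hypothetical 0-1 trust score for the source


def rank_with_booster(cands: list[Candidate], weight: float = 0.3) -> list[str]:
    """Booster model: trust nudges the score, but every page stays eligible."""
    scored = sorted(cands, key=lambda c: c.relevance + weight * c.trust, reverse=True)
    return [c.url for c in scored]


def rank_with_gate(cands: list[Candidate], threshold: float = 0.7) -> list[str]:
    """Gatekeeper model: pages below the trust threshold are dropped entirely,
    then the survivors are ordered by relevance alone."""
    eligible = [c for c in cands if c.trust >= threshold]
    eligible.sort(key=lambda c: c.relevance, reverse=True)
    return [c.url for c in eligible]


pages = [
    Candidate("https://example.com/keyword-perfect", relevance=0.99, trust=0.40),
    Candidate("https://example.org/expert-guide", relevance=0.80, trust=0.90),
    Candidate("https://example.net/decent-post", relevance=0.70, trust=0.75),
]

print(rank_with_booster(pages))
print(rank_with_gate(pages))
```

With these made-up numbers, the booster model still puts the keyword-perfect page first, while the gate removes it completely and returns only the two trusted pages.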

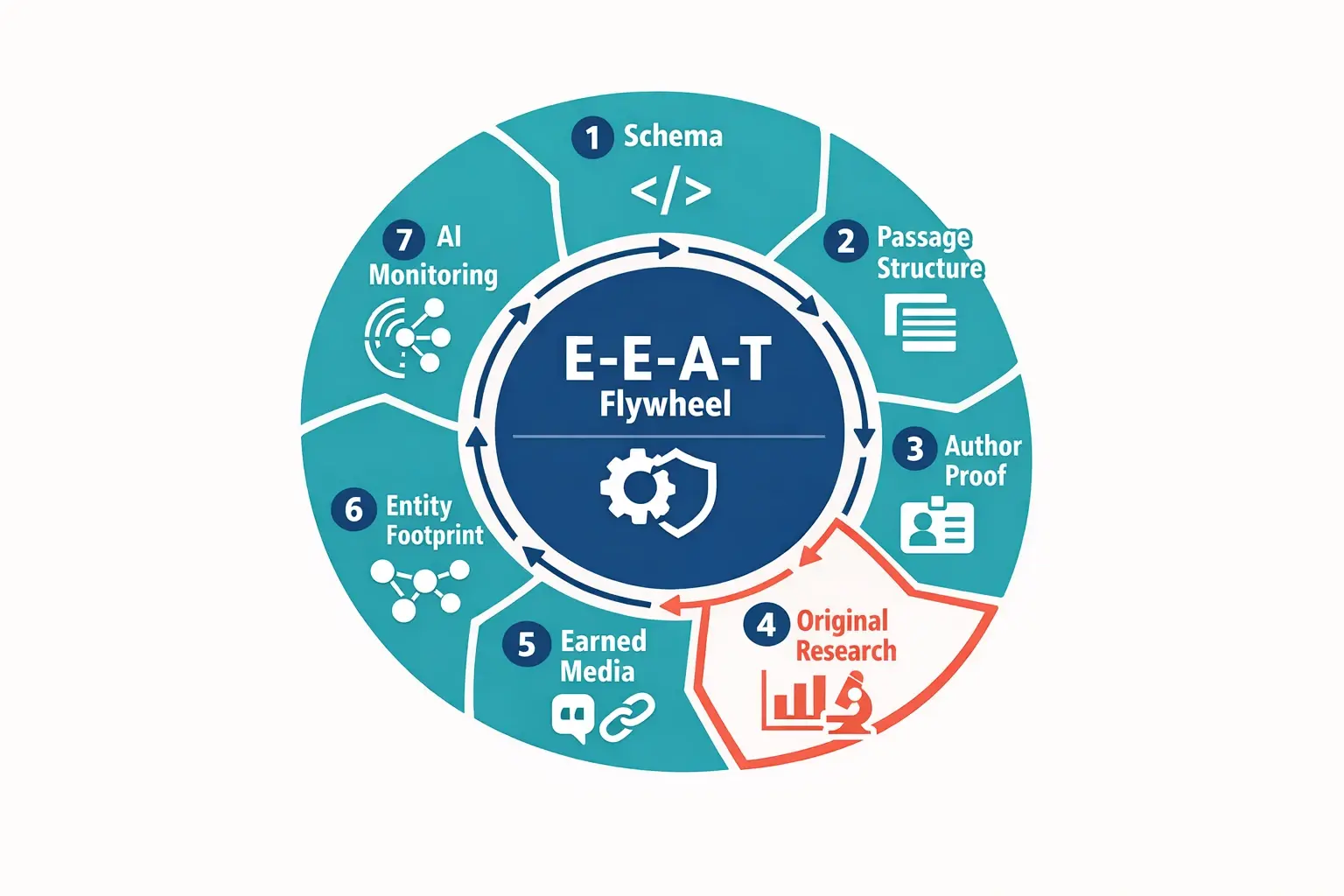

To understand what algorithms are looking for, we have to look at the bedrock of modern content evaluation: E-E-A-T. While Google originally introduced these guidelines for human Quality Raters, they have now become the foundational signals that machine learning models are trained to detect automatically.

Here is how AI interprets the four pillars of E-E-A-T:

AI algorithms are becoming incredibly adept at sniffing out generic, aggregated content. To prove Experience, the algorithm looks for machine-readable evidence of first-hand involvement. This includes original photography (for example, images that still carry their original EXIF camera metadata), unique data sets, specific methodologies, and before-and-after analyses. It’s no longer enough to say "I tested this product"; you must provide concrete proof in a form the algorithm can verify.

Expertise is about demonstrating comprehensive knowledge of a subject. AI evaluates this by analyzing your site's topical clusters and the semantic relationships between your pages. A well-structured internal link building strategy helps the algorithm map your expertise, showing that you don't just have one surface-level article, but a deep, interconnected library of advanced technical knowledge. It also heavily weighs author metadata—who actually wrote this, and are they recognized elsewhere online?
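One way to check whether your internal links actually form an interconnected library is to audit a single topic cluster. The Python sketch below uses a hypothetical, hand-written link map and assumes a "pillar page" convention (one hub page per topic); in practice the map would come from a crawl of your own site.

```python
# Hypothetical site map: each page lists the internal pages it links to.
links = {
    "/guides/seo": ["/guides/seo/technical", "/guides/seo/content", "/guides/seo/links"],
    "/guides/seo/technical": ["/guides/seo", "/guides/seo/content"],
    "/guides/seo/content": ["/guides/seo"],
    "/guides/seo/links": [],                 # never links back to the pillar
    "/guides/seo/schema": ["/guides/seo"],   # no other page links here
}


def audit_cluster(links: dict[str, list[str]], pillar: str) -> dict[str, list[str]]:
    """Flag cluster pages that don't link back to the pillar, and orphan pages
    that receive no internal links at all."""
    inbound = {page: 0 for page in links}
    for source, targets in links.items():
        for target in targets:
            # Count only links to known pages, and ignore self-links.
            if target in inbound and target != source:
                inbound[target] += 1
    missing_pillar_link = sorted(
        page for page, targets in links.items() if page != pillar and pillar not in targets
    )
    orphans = sorted(page for page, count in inbound.items() if count == 0)
    return {"missing_pillar_link": missing_pillar_link, "orphans": orphans}


print(audit_cluster(links, "/guides/seo"))
```

Here the audit flags `/guides/seo/links` for not linking back to the pillar and `/guides/seo/schema` as an orphan that nothing else points to.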

Authoritativeness is your reputation in your specific niche. AI determines this through "Entity Recognition." It scans the broader internet to see if your brand or authors are recognized as established entities in databases like Wikidata or Google’s Knowledge Graph. Furthermore, the algorithm assesses earned media and brand mentions: when high-tier publications talk about your brand naturally, it serves as a powerful validation of your authority.
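A common way to help machines connect your website to your entity records elsewhere is schema.org structured data, where the `sameAs` property lists other profiles of the same organization. The Python sketch below generates Organization markup as JSON-LD; the business name, URLs, and Wikidata item are hypothetical placeholders, and adding markup like this is a hint to machines, not a guarantee of recognition.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build schema.org Organization markup that ties the site to external
    profiles of the same entity via the `sameAs` property."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


# All values below are hypothetical placeholders.
markup = organization_jsonld(
    name="Example Plumbing Ltd",
    url="https://www.example.com",
    same_as=[
        "https://www.wikidata.org/wiki/Q0000000",  # placeholder, not a real item
        "https://www.linkedin.com/company/example-plumbing",
    ],
)

# Wrap the JSON in the script tag that goes in the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```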

Trust is the most critical component; if trust is low, the other three pillars collapse. Algorithms evaluate Trustworthiness through technical signals (secure connections, fast load times), clear editorial policies, transparent contact information, and accountability. As highlighted in recent Harvard Business Review studies, building "Trusted AI" requires transparency and accountability—and AI search engines demand the exact same things from the websites they cite.

It’s one thing to know what E-E-A-T is; it’s another to understand how an algorithm processes it. AI doesn't read a webpage from top to bottom like a human enjoying a novel. Instead, it breaks content down into vector embeddings—mathematical representations of meaning and context.
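Retrieval systems built on embeddings commonly compare meaning with cosine similarity: the closer two vectors point in the same direction, the more related the texts are judged to be. The Python sketch below uses hand-made three-dimensional vectors as a stand-in for real embeddings (which have hundreds or thousands of dimensions and come from a trained model); the query, passage titles, and numbers are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "embeddings" for a query and two passages (hypothetical values).
query = [0.9, 0.1, 0.3]
passages = {
    "How to fix a leaking tap": [0.8, 0.2, 0.4],
    "Company history": [0.1, 0.9, 0.2],
}

# Pick the passage whose vector is most similar to the query vector.
best = max(passages, key=lambda title: cosine_similarity(query, passages[title]))
print(best)
```

With these toy vectors, the leaking-tap passage scores far higher than the company history, so it is the one that would be retrieved.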

One of the most fascinating developments in AI search behavior is how it favors "micro-content." AI systems built on large language models (LLMs), such as ChatGPT and Google’s AI Overviews, typically extract information in passages of 150 to 300 words.

If your content answers a specific query comprehensively within a well-structured, self-contained passage, it is highly likely to pass the "Extraction Test." The algorithm grabs that specific block of text, assesses the trust score of the surrounding domain, and if both pass, you become the cited source.
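If you want to audit your own pages against the 150- to 300-word range, a rough Python sketch like the one below can help. It assumes passages are separated by blank lines and treats the word range as a rule of thumb; AI platforms do not publish exactly how they segment pages.

```python
import re

MIN_WORDS, MAX_WORDS = 150, 300  # rule-of-thumb range from the text, not a spec


def passage_report(text: str) -> list[tuple[int, int, str]]:
    """Split text on blank lines and label each passage by its word count."""
    passages = [p for p in re.split(r"\n\s*\n", text.strip()) if p.strip()]
    report = []
    for index, passage in enumerate(passages, start=1):
        words = len(passage.split())
        if words < MIN_WORDS:
            status = "too short"
        elif words > MAX_WORDS:
            status = "too long"
        else:
            status = "ok"
        report.append((index, words, status))
    return report


# Placeholder text: three passages of 40, 200, and 320 words.
sample = ("word " * 40) + "\n\n" + ("word " * 200) + "\n\n" + ("word " * 320)
for index, words, status in passage_report(sample):
    print(f"passage {index}: {words} words ({status})")
```

A word count only checks length; whether a passage genuinely answers a query on its own still needs an editor's judgment.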

Not all signals are created equal. Self-proclaimed expertise ("I am the best plumber in London") carries very little weight. Conversely, a dense entity footprint—where your business is linked to industry associations, local chambers of commerce, and high-quality editorial placements—carries immense weight.

To AI, verification is everything. Algorithms look for consistency across the web to ensure that the claims made on your website match the reputation you hold externally.
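One practical way to act on this is to audit whether your core business details match everywhere they appear. The Python sketch below compares hypothetical name and phone records from a few sources against your website. The normalization rules (lowercasing, stripping punctuation, comparing only the last ten digits of a phone number) are simplifying assumptions, not how any AI platform is known to check consistency.

```python
import re

# Hypothetical records for the same business gathered from different sources.
listings = {
    "website": {"name": "Example Plumbing Ltd", "phone": "+44 20 7946 0000"},
    "directory": {"name": "Example Plumbing Ltd.", "phone": "020 7946 0000"},
    "social": {"name": "Example Plumbers", "phone": "+44 (0)20 7946 0000"},
}


def normalize(field: str, value: str) -> str:
    """Reduce a value to a comparable form (crude, assumption-based rules)."""
    if field == "phone":
        digits = re.sub(r"\D", "", value)
        return digits[-10:]  # ignores country codes and trunk prefixes
    return re.sub(r"[^a-z0-9 ]", "", value.lower()).strip()


def find_inconsistencies(
    listings: dict[str, dict[str, str]], reference: str = "website"
) -> list[tuple[str, str]]:
    """Return (source, field) pairs that disagree with the reference record."""
    ref = listings[reference]
    issues = []
    for source, record in listings.items():
        if source == reference:
            continue
        for field, value in ref.items():
            if normalize(field, record.get(field, "")) != normalize(field, value):
                issues.append((source, field))
    return issues


print(find_inconsistencies(listings))
```

Here the phone numbers all reduce to the same digits, so the only flagged mismatch is the business name on the social profile.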

Understanding the theory is the first step, but how do businesses adapt to this reality? The most successful organizations treat their digital strategy as an iterative process. If you want to start aligning your site with AI trust scoring, begin with the answers to a few common questions.

Is E-E-A-T a direct ranking factor? Strictly speaking, Google and other search engines have stated that E-E-A-T is not a single, quantifiable ranking metric you can look up. However, it is a conceptual framework that informs many of the actual algorithmic signals used to rank and cite content. In the realm of AI citations, it acts more like a mandatory filter: if your trust signals are too low, you simply won't be considered for a citation.

How long does it take to build algorithmic trust? Unlike tweaking a title tag and seeing a jump in rankings the next day, algorithmic trust is built over time. It typically takes months of consistent, high-quality content publication, earned media, and technical stability for an AI entity graph to update and fully recognize your brand as a trusted authority.

Can AI tell whether content reflects genuine first-hand experience? Increasingly, yes. AI analyzes linguistic patterns, depth of detail, and supporting metadata. If an article claims to review a complex piece of software but only uses generic descriptions found on the manufacturer's website, lacking unique screenshots, specific workflows, or nuanced critiques, the algorithm will likely flag it as lacking genuine Experience.

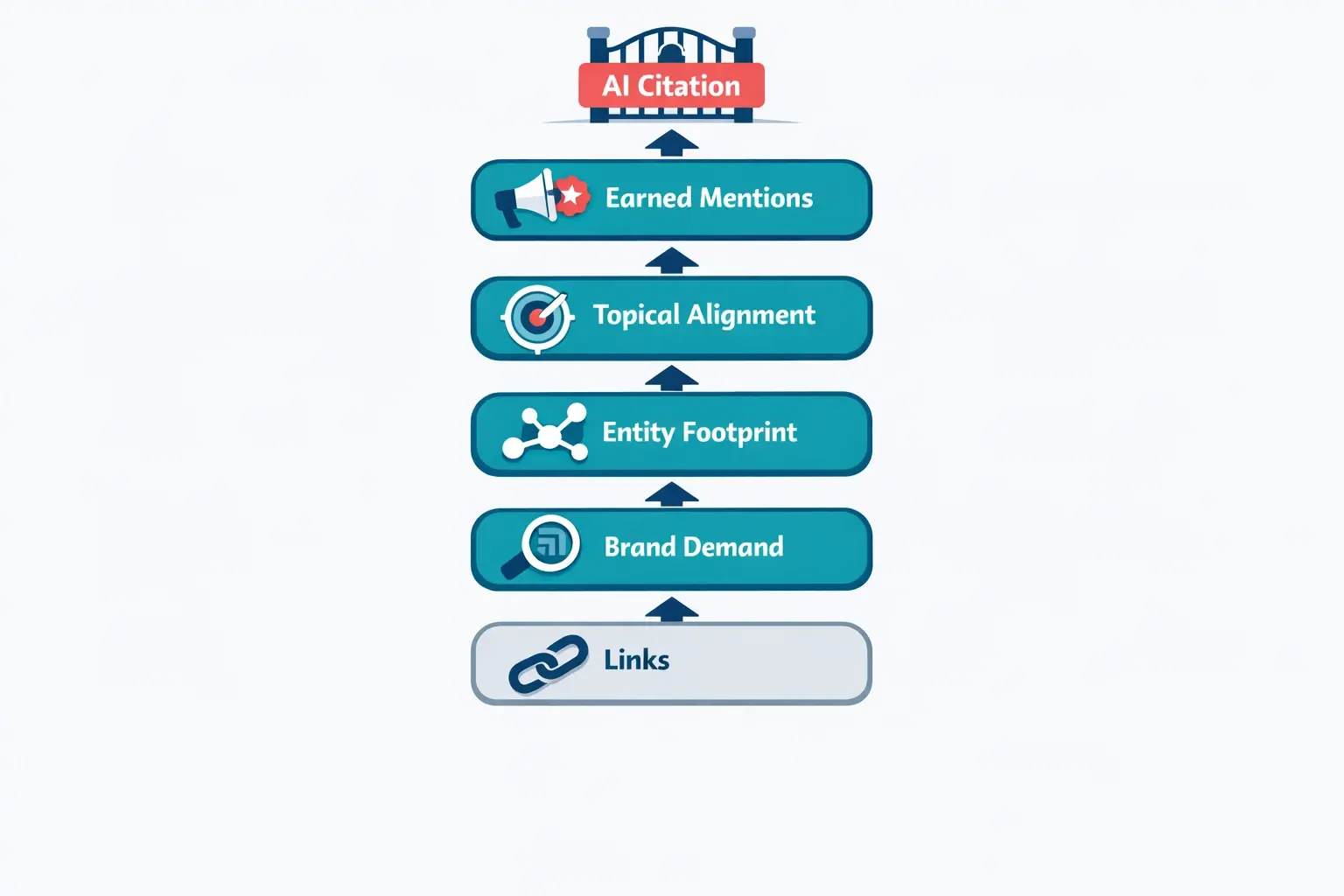

Do backlinks still matter in AI-driven search? Yes, but the context has evolved. Backlinks are no longer just "votes" to push you up a numerical ladder. They are context-providers. A high-quality backlink tells the AI what you are an authority in and connects your brand entity to other trusted entities. Quality and relevance are more critical than ever.

The transition from traditional search to AI-driven discovery can feel overwhelming, but it is also a tremendous opportunity. Brands that take the time to understand Algorithmic Trust Scoring today are building moats around their digital presence that competitors will struggle to cross tomorrow.

Start by evaluating your own website objectively. Look at your most important pages and ask: Does this prove my experience? Is my expertise obvious? Am I making it easy for a machine to verify my brand's authority?

By shifting your focus away from chasing legacy metrics and toward building genuine, verifiable algorithmic trust, you align your business with the future of search. The algorithms are learning—make sure they're learning to trust you.